Digital Asset Management (DAM) systems sit at the heart of most marcoms operations. They centralise content, organise it, and make it discoverable. Integrated with the wider MarTech stack, DAMs support governance and drive efficiency. But video has changed the brief.

Video is no longer an occasional campaign asset. It is now the dominant content format across marketing, product, internal communications, and customer engagement. And as volumes grow, so do the operational pressures.

The issue is not whether your DAM can store video.

The issue is whether your teams can discover, reuse, adapt, and govern it efficiently at scale.

Because when discovery slows, reuse drops.

And when reuse drops, costs rise – often without anyone noticing.

Video Is ‘Just Another File’ – Until It Isn’t

At a basic level, a video file is simply another digital asset. A DAM can store it, catalogue it, and apply metadata to it. But video carries characteristics that fundamentally change how it needs to be managed:

- Format complexity. Video comes in a wide range of encoded formats (CODECs), each with different configurations – frame rates, encoding structures, audio arrangements. These aren’t cosmetic differences; they directly affect compatibility, quality, and approach to distribution.

- File size and accessibility. Professional video files are large and often not web-browser compatible. That makes previewing, streaming, and collaboration harder within systems designed primarily for static media.

- Time-based structure. Unlike images, video unfolds over time. Metadata doesn’t just apply to the whole asset – it applies to specific periods within it.

- Localisation and variants. Subtitles, audio stems, regulatory edits, regional variations – these are related and often interdependent components, not just new versions of the same file.

- Derivative creation. Social cutdowns, vertical edits, different durations – all need to maintain lineage back to the master asset to avoid duplication and rights infringements.

- Ongoing editing cycles. Video assets are routinely adapted long after creation or first publication. Their lifecycle is longer, dynamic and continuous.

And perhaps most importantly, creatives and marketers are rarely searching for a file.

They are searching for a moment – a product shot, a quote, a scene, a reaction. That distinction is where traditional DAM models begin to strain.
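The lineage requirement in the list above can be pictured as a chain of parent links from each derivative back to its master. The following is a minimal sketch under stated assumptions: the `Asset` class, the `parent_id` field, and the identifiers are hypothetical and not taken from any particular DAM or MAM product.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Asset:
    asset_id: str
    kind: str                         # e.g. "master", "cutdown", "vertical"
    parent_id: Optional[str] = None   # None marks a master asset


def lineage(asset_id, catalogue):
    """Walk parent links from an asset back to its master."""
    chain = []
    seen = set()
    current = asset_id
    while current is not None:
        if current in seen:
            raise ValueError(f"cycle in lineage at {current}")
        seen.add(current)
        asset = catalogue[current]
        chain.append(asset.asset_id)
        current = asset.parent_id
    return chain


# Hypothetical catalogue: a vertical edit cut from a cutdown of the master.
catalogue = {a.asset_id: a for a in [
    Asset("m-100", "master"),
    Asset("c-101", "cutdown", parent_id="m-100"),
    Asset("v-102", "vertical", parent_id="c-101"),
]}

print(lineage("v-102", catalogue))  # ['v-102', 'c-101', 'm-100']
```

With the chain available, a rights change on the master can be traced to every derivative that depends on it, rather than relying on file names or memory.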

Finding the Right Moment – Not Just the Right Asset

Metadata has always powered discovery inside DAM systems. This object-based metadata – be it campaign, product, spokesperson, usage rights – works well when assets are static.

But video exists in two dimensions:

- Catalogue metadata – information about the asset as a whole.

- Temporal metadata – information tied to specific time periods within the asset.

A tag might say “Product X is in this asset,” but it won’t say whether that appears in the first five seconds or the last thirty. It won’t tell you whether the segment you want to use is already in use elsewhere, or whether its rights have expired. That lack of clarity increases risk and kills efficiency.
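To make the two dimensions concrete, here is a minimal sketch of how catalogue and temporal metadata might sit side by side. Every name in it (`VideoAsset`, `Segment`, `find_moments`, the tags and dates) is an assumption for illustration, not a description of any specific product's data model.

```python
from dataclasses import dataclass, field
from datetime import date
from typing import Optional


@dataclass
class Segment:
    """Temporal metadata: tags tied to a time range within the video."""
    start_s: float                         # seconds from the start of the asset
    end_s: float
    tags: set
    rights_expire: Optional[date] = None   # None means no expiry recorded


@dataclass
class VideoAsset:
    """Catalogue metadata: information about the asset as a whole."""
    asset_id: str
    title: str
    campaign: str
    segments: list = field(default_factory=list)


def find_moments(assets, tag, on_date):
    """Return (asset_id, start_s, end_s) for tagged segments still in rights."""
    hits = []
    for asset in assets:
        for seg in asset.segments:
            expired = seg.rights_expire is not None and seg.rights_expire < on_date
            if tag in seg.tags and not expired:
                hits.append((asset.asset_id, seg.start_s, seg.end_s))
    return hits


# Hypothetical library: Product X appears twice, once with lapsed rights.
library = [
    VideoAsset("vid-001", "Spring launch film", "spring-launch", [
        Segment(0.0, 5.0, {"product-x", "close-up"}),
        Segment(42.0, 72.0, {"product-x", "spokesperson"},
                rights_expire=date(2025, 1, 31)),
    ]),
]

moments = find_moments(library, "product-x", on_date=date(2026, 3, 1))
print(moments)  # [('vid-001', 0.0, 5.0)]
```

The search returns a time range rather than a file, and the segment whose rights have lapsed is filtered out before anyone reuses it.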

At a small operational scale, teams can compensate with institutional memory, spreadsheets, and manual review.

At enterprise scale – across regions, agencies, languages, and campaigns – that approach quickly breaks down.

When discovery doesn’t deliver, teams instinctively create their own workarounds: local edits, shared folders, private versions – bypassing the DAM because it doesn’t give them what they need when they need it. That behaviour isn’t just inefficient; it erodes governance, inflates production costs, and reduces ROI from existing content.

The Hidden Cost of “Making It Work”

Most modern DAM platforms support video in some form. Many do so capably within the limits of their original design. But “supporting” video often means adapting workflows around a model designed for static assets.

That adaptation typically looks like:

- Additional tools bolted on around the DAM

- Manual reformatting and distribution processes

- Workarounds for preview and playback

- Fragmented metadata across systems

- Disconnected rights tracking

Individually, these compromises feel manageable.

Collectively, they create friction – and that friction compounds as content volumes grow.

Managing Video Requires a Shift in Perspective

The real question isn’t: “Can our DAM store video?” It’s: “Are we managing video on its own terms?”

What’s emerging is not a rejection of DAM, but a more nuanced ecosystem:

- DAM remains essential for governance, brand control, and enterprise-wide visibility.

- Video-native systems handle time-based metadata, format complexity, version control, and high-volume processing.

- Integration ensures both operate cohesively rather than competitively.

Savvy teams are not looking for a monolithic “silver bullet.” They are rethinking their architecture so that each specialised system – DAM, video indexer, transcoder, rights engine – contributes a distinct capability. The task then becomes enabling systems to collaborate, not forcing one to do everything. That mindset separates high-performing teams from those stuck patching processes.

Lessons from Media & Entertainment

These challenges are not new. The Media & Entertainment sector has been solving them since the late 1990s through Media Asset Management (MAM) systems. For broadcasters, manual processes were never viable.

Operating efficiently required:

- Structured, time-aware metadata

- High levels of automation

- Tight integration between production and business systems

- Clear orchestration across ingest, edit, versioning, and distribution

As corporate video demand begins to reach broadcast volumes, marcoms teams are encountering similar pressures, often without the infrastructure on which professional media organisations rely.

Automation Is No Longer Optional

With marcoms content demand chains growing rapidly, manual operations are becoming infeasible – even for mid-sized teams.

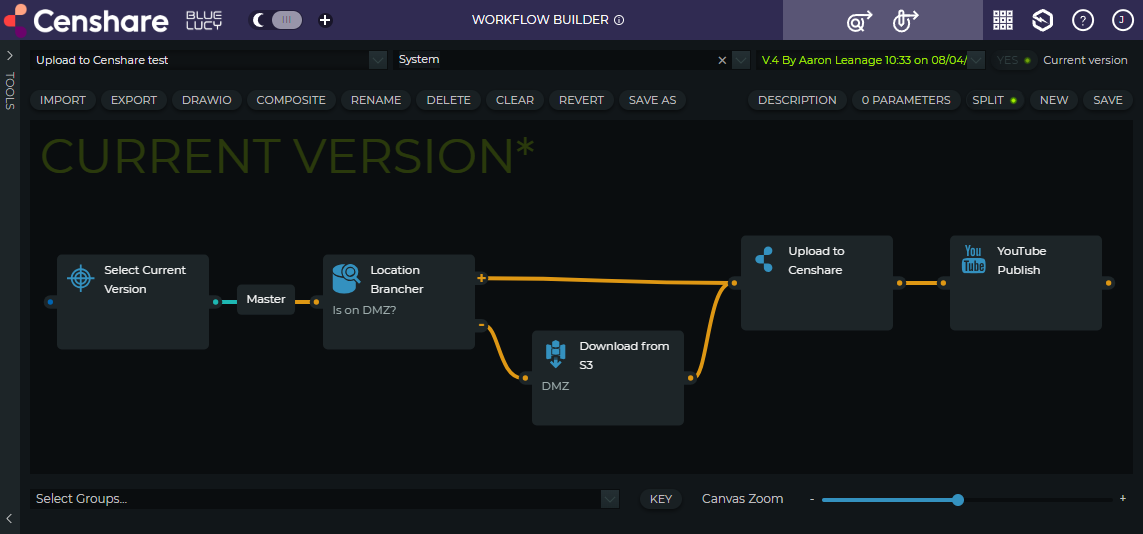

Video management at scale requires orchestration:

- Automated transcoding into multiple formats

- Structured version control

- Omni-channel distribution

- Integrated rights and compliance management
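As a rough illustration of how those four elements could combine, the sketch below plans one transcode job per distribution channel for a given version of a master, skipping any channel without rights clearance. The profiles, channel names, and the `plan_jobs` function are hypothetical, and the sketch only builds a job list; it does not invoke a real transcoder.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Rendition:
    """A hypothetical output profile for one distribution channel."""
    channel: str
    codec: str
    width: int
    height: int


@dataclass(frozen=True)
class Job:
    master_id: str
    version: int
    rendition: Rendition
    output_name: str


# Illustrative profiles only; real channel specifications vary.
PROFILES = [
    Rendition("web", "h264", 1920, 1080),
    Rendition("social-vertical", "h264", 1080, 1920),
    Rendition("archive", "prores", 3840, 2160),
]


def plan_jobs(master_id, version, cleared_channels):
    """Build one transcode job per profile whose channel is rights-cleared."""
    jobs = []
    for r in PROFILES:
        if r.channel not in cleared_channels:
            continue  # rights check: skip channels without clearance
        name = f"{master_id}_v{version}_{r.channel}"
        jobs.append(Job(master_id, version, r, name))
    return jobs


jobs = plan_jobs("vid-001", 3, cleared_channels={"web", "social-vertical"})
for job in jobs:
    print(job.output_name)
# vid-001_v3_web
# vid-001_v3_social-vertical
```

Because the version number and rights check are part of the plan rather than a manual step, every output can be traced to the master version it came from and the channels it was cleared for.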

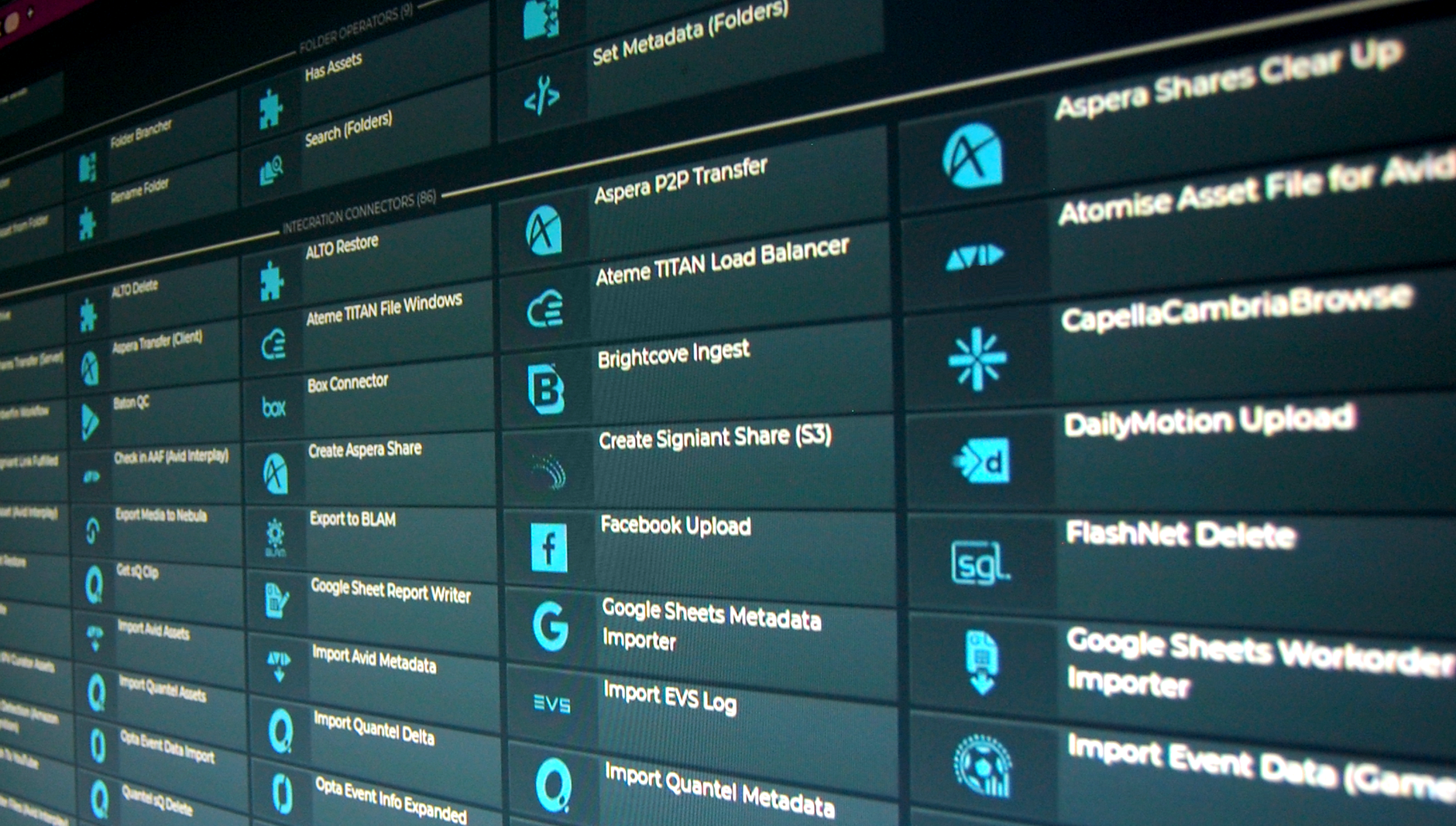

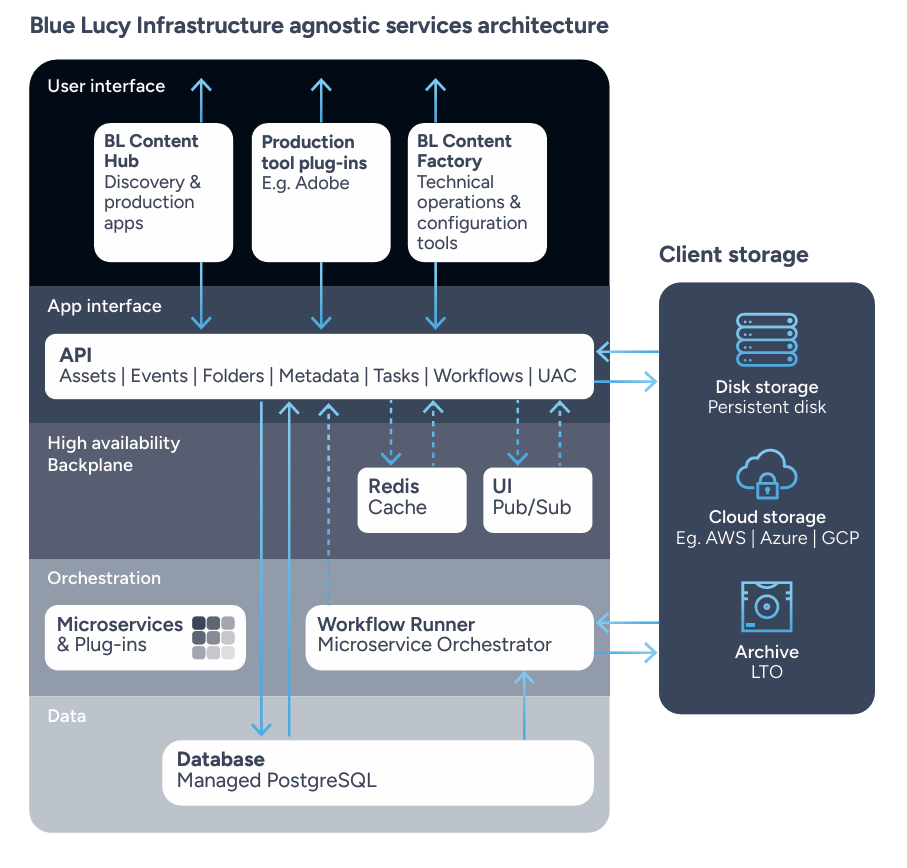

Adjacent to automation is the need to reduce friction between systems. Modern media systems such as the Blue Lucy platform are built with structured integration frameworks designed to connect production tools, DAMs, and business systems efficiently.

Because the real risk isn’t just storage capacity.

It’s operational complexity, and the erosion of value from content you’ve already invested in creating.

The Real Challenge

Video is now the dominant marketing medium. Storing it is easy. Managing it intelligently – discovering moments, reusing content, coordinating derivatives, and maintaining governance at scale – is the real challenge.

Organisations that recognise this shift early are building integrated, automated video operations designed for growth.

Those that continue adapting static systems to dynamic media will find the friction, and the cost, only increases over time.

LONDON, England – March 12th, 2026 – Blue Lucy will showcase its orchestration platform at NAB 2026 (Booth W2318), demonstrating how broadcasters and media companies can integrate multiple AI services into their production and content supply chain workflows while maintaining full governance, transparency, and control.
